Why Bolting AI onto Existing Apps Is Costing You Users, Velocity, and Market Position

The product roadmap looks confident on paper. The engineering team is capable. There is budget allocated, a stakeholder deadline in place, and a clear goal: ship a smarter mobile app. Yet months into development, the results feel underwhelming. The AI features are slow. The user experience feels stitched together. And competitors who launched later are somehow moving faster.

This is the defining problem of 2025 for mobile product teams: not a lack of AI tools, but a fundamental mismatch in how they are building. Most teams are still adding AI features to apps that were never architected to support them. That approach is not a shortcut. It is a structural liability.

The “AI-Added” Trap Most Teams Fall Into

When AI gets bolted onto an existing mobile application, the seams show quickly. Engineers spend weeks wiring inference calls into code that was never designed for real-time model outputs. Latency creeps in. State management becomes fragile. The personalization logic fights with the original architecture, and what ships is a watered-down version of the original vision.

The data reflects this. Generative AI app downloads worldwide approached 1.5 billion in 2024, a 92% increase year-over-year, and global in-app purchase revenue from AI apps nearly tripled to $1.3 billion. That growth is not coming from incumbents that patched in a chatbot. It is coming from products designed from the ground up with intelligence at the core.

The pressure on product leaders is real. Nearly 66% of mid-to-large U.S. enterprises have committed to AI integration in their mobile applications, with a focus on real-time responsiveness, personalization, and predictive functionality. But commitment and execution are two very different things, and the architectural decisions made at the start determine which side of that gap a team lands on.

The problem compounds in the middle of a sprint cycle. Teams discover they need to rebuild the data pipeline, refactor the API layer, or rethink state management entirely, none of which was scoped in the original estimate. Timelines slip. Trust erodes. The AI ambition shrinks to fit what the old architecture will allow.

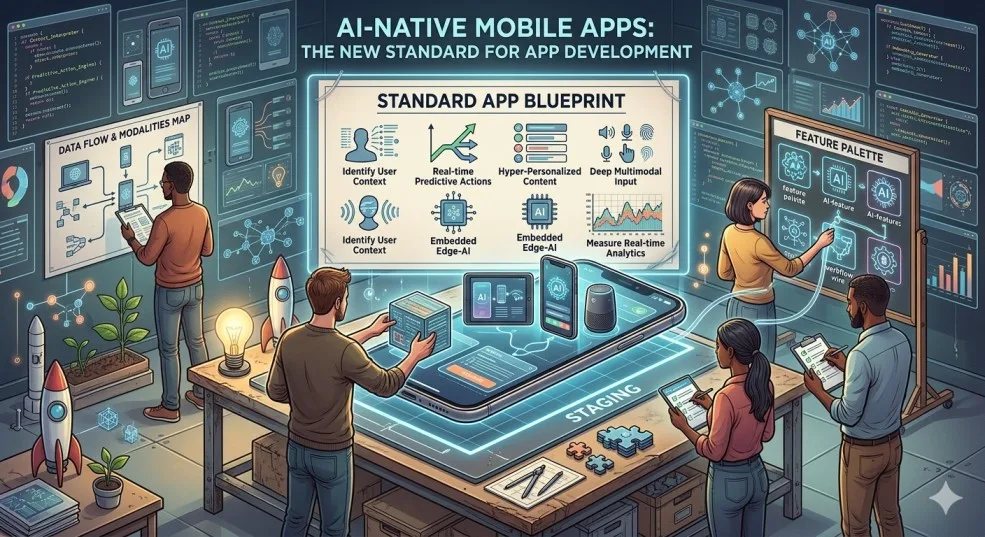

What “AI-Native” Actually Means in Practice

An AI-native mobile app is not simply one that uses AI. It is one where the entire technical foundation (data flows, personalization loops, model integration points, backend services) is designed with intelligence as a first-class requirement from day one.

The distinction matters enormously at the architecture level. In an AI-native app, on-device inference handles low-latency decisions locally using frameworks like TensorFlow Lite or Core ML. Cloud-based models handle heavier tasks through well-defined API contracts. User behavior continuously feeds back into the system to improve recommendations, routing, and engagement, not as a post-launch feature but as a built-in loop. The result is an application that adapts in real time, rather than one that responds in batches or not at all.
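The split between on-device and cloud inference can be sketched as a small routing decision. This is a minimal illustration, not a reference design: the names `InferenceRequest`, `HybridRouter`, and `cloud_round_trip_ms`, along with the task labels, are hypothetical, and a real app would wrap an actual TensorFlow Lite or Core ML model and a real cloud endpoint behind each backend.

```python
from dataclasses import dataclass
from typing import Set


@dataclass
class InferenceRequest:
    task: str               # hypothetical task label, e.g. "rank_feed"
    latency_budget_ms: int  # how long the UI can wait for an answer


class HybridRouter:
    """Picks a backend per request (illustrative only)."""

    def __init__(self, local_tasks: Set[str], cloud_round_trip_ms: int = 300):
        # Tasks for which a small on-device model is assumed to be bundled.
        self.local_tasks = local_tasks
        # Assumed typical network round trip to the cloud model.
        self.cloud_round_trip_ms = cloud_round_trip_ms

    def choose_backend(self, req: InferenceRequest, online: bool = True) -> str:
        local_ok = req.task in self.local_tasks
        too_tight_for_cloud = req.latency_budget_ms < self.cloud_round_trip_ms
        # Stay on-device when the device is offline or the budget is too tight.
        if local_ok and (not online or too_tight_for_cloud):
            return "on_device"
        # Otherwise prefer the heavier cloud model when it is reachable.
        if online:
            return "cloud"
        raise RuntimeError(f"no backend available offline for task {req.task!r}")


router = HybridRouter(local_tasks={"rank_feed"})
print(router.choose_backend(InferenceRequest("rank_feed", latency_budget_ms=50)))
print(router.choose_backend(InferenceRequest("summarize", latency_budget_ms=2000)))
```

Keeping the decision in one place is the point of the sketch: the UI code asks for a result and never needs to know which backend produced it.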

The market for AI in mobile apps is projected to grow from $21.23 billion in 2024 to $354.09 billion by 2034, expanding at a CAGR of 32.5%. The companies capturing that growth share a common trait: they chose the right architecture before writing the first line of user-facing code.
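As a quick sanity check, the projection is internally consistent: compounding the 2024 figure at the stated rate for ten years lands close to the 2034 figure. The helper names below are illustrative, and the calculation assumes simple annual compounding over exactly ten periods.

```python
def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate implied by a start and end value."""
    return (end / start) ** (1 / years) - 1


def project(start: float, rate: float, years: int) -> float:
    """Value after compounding `start` at `rate` for `years` periods."""
    return start * (1 + rate) ** years


# Figures quoted in the text, in billions of USD.
start_2024, end_2034, years = 21.23, 354.09, 10

print(f"implied CAGR: {implied_cagr(start_2024, end_2034, years):.1%}")
print(f"projected 2034 value at 32.5%: {project(start_2024, 0.325, years):.2f}")
```

The implied rate comes out at roughly 32.5%, which matches the quoted CAGR.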

The key technical pillars that define an AI-native build are worth naming clearly:

- Model-first data architecture: data schemas and pipelines are designed around what the models need, not retrofitted to feed them

- Modular AI services: inference logic, recommendation engines, and NLP layers are isolated as independent services that can be updated without app-store deployments

- MLOps from day one: continuous model monitoring, version control, and rollback capabilities are built into the CI/CD pipeline rather than treated as future-phase concerns

These are not aspirational features. They are the baseline for any team that intends to ship AI functionality that works reliably at scale.
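To make the second and third pillars concrete, here is a minimal sketch of a server-side model registry that lets a service switch or roll back model versions without shipping a new app build. `ModelRegistry` and its methods are hypothetical names for illustration, and the lambdas stand in for real models; a production setup would typically rely on dedicated MLOps tooling.

```python
from typing import Callable, Dict, List, Optional

# A "model" here is any callable that maps features to a score.
Predict = Callable[[dict], float]


class ModelRegistry:
    """Tracks registered model versions and which one is live."""

    def __init__(self) -> None:
        self._versions: Dict[str, Predict] = {}
        self._history: List[str] = []  # activation order, newest last

    def register(self, version: str, predict: Predict) -> None:
        self._versions[version] = predict

    def activate(self, version: str) -> None:
        if version not in self._versions:
            raise KeyError(f"unknown model version: {version}")
        self._history.append(version)

    @property
    def active(self) -> Optional[str]:
        return self._history[-1] if self._history else None

    def rollback(self) -> str:
        """Reverts to the previously active version and returns its name."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self._history[-1]

    def predict(self, features: dict) -> float:
        if self.active is None:
            raise RuntimeError("no active model version")
        return self._versions[self.active](features)


registry = ModelRegistry()
registry.register("v1", lambda features: 0.2)  # stand-in for a real model
registry.register("v2", lambda features: 0.7)
registry.activate("v1")
registry.activate("v2")
registry.rollback()  # v2 misbehaves in production, so v1 goes live again
print(registry.active)
```

Because the mobile client only calls the service, swapping `v2` back to `v1` happens entirely server-side.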

How Leading Companies Are Executing This

The shift is already visible across the industry. Duolingo and Strava, two companies with mature mobile products, have both made public commitments to weaving AI into the core of their user experience, not as a premium add-on but as the primary engine of engagement. Both continue to roll out AI features to improve the user experience, and fifteen different app categories are now adding AI-related terms to their product descriptions.

JPMorgan, IBM, and Amazon have each embedded AI-native design principles into their mobile product development practices, using them to drive predictive analytics, real-time risk assessment, and intelligent customer support at scale. These are not experimental deployments. They are production systems built on architectures that treat model integration as a load-bearing structural element, not a feature ticket.

On the development side, firms like GeekyAnts have built their practice specifically around this model. The Bangalore- and US-based engineering firm has shifted from traditional mobile delivery toward what it describes internally as “AI-intelligence in every layer”: embedding inference, personalization, and adaptive logic into the product architecture before UI work begins. Its open-source UI library, gluestack-ui, ranked in the top two in the Component Libraries category of the State of React Native 2025 survey, a signal of where the developer community’s trust is sitting. In a documented client engagement, GeekyAnts reduced manual validation by 50% and accelerated testing by 30% using AI agents, RAG models, and BPM automation.

The pattern across all of these cases is consistent: the teams that are executing well made architectural decisions early that gave them room to iterate fast and ship reliably. The teams that are struggling made those decisions late, or not at all.

What Product and Engineering Leaders Need to Change Now

The gap between teams that are delivering AI-native products and those that are stuck in the “AI-added” trap is not primarily a talent gap or a budget gap. It is a planning and prioritization gap.

Downloads for generative AI apps neared 1.7 billion in just the first half of 2025, with in-app purchase revenue reaching nearly $1.9 billion, and download growth in that period hit 67% half-over-half, the fastest since the initial surge of 2023. The window to establish a defensible AI-native product is not closing slowly.

Product leaders who want to close the execution gap should start with a hard audit of their current architecture. The question is not “where can we add AI?” but “does our current foundation support AI as a structural requirement?” If the answer is no, the right move is an architectural reset, ideally before the next major feature cycle, not after it.

Engineering leads, meanwhile, need to push for MLOps capability as a first-sprint deliverable rather than a post-launch project. Model monitoring, versioning, and automated rollback are not DevOps niceties. They are operational requirements for any app that serves AI-generated content or decisions to real users.
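What monitoring with automated rollback might look like can be sketched in a few lines. This is only an illustration under stated assumptions: `QualityMonitor`, its window size, and its error-rate threshold are hypothetical, and the `on_breach` callback stands in for whatever rollback mechanism the team actually uses.

```python
from collections import deque
from typing import Callable, Deque, List


class QualityMonitor:
    """Watches a rolling window of outcomes and fires once on a breach."""

    def __init__(self, window: int, max_error_rate: float,
                 on_breach: Callable[[float], None]) -> None:
        self._window = window
        self._outcomes: Deque[bool] = deque(maxlen=window)
        self._max_error_rate = max_error_rate
        self._on_breach = on_breach
        self._tripped = False

    def record(self, ok: bool) -> None:
        """Records one prediction outcome (True means acceptable)."""
        self._outcomes.append(ok)
        if len(self._outcomes) < self._window:
            return  # wait until the window is full before judging
        error_rate = self._outcomes.count(False) / self._window
        if error_rate > self._max_error_rate and not self._tripped:
            self._tripped = True  # fire only once per breach
            self._on_breach(error_rate)


alerts: List[float] = []
# In a real system, on_breach would trigger a rollback rather than log a value.
monitor = QualityMonitor(window=4, max_error_rate=0.25, on_breach=alerts.append)
for outcome in [True, True, False, False, False]:
    monitor.record(outcome)
print(alerts)
```

How an outcome is judged acceptable (user feedback, a validation rule, a shadow model) is a product decision the sketch deliberately leaves open.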

The competitive reality is clear: AI is no longer a niche on mobile. It has already become part of everyday mobile demand alongside social media, messaging, and video services. Users now expect their apps to anticipate, adapt, and respond intelligently. Products that cannot deliver that experience will face churn that no onboarding flow can fix.

The standard has moved. The question is whether the architecture has moved with it.

Frequently Asked Questions

What is an AI-native mobile app, and how is it different from an app with AI features?

An AI-native mobile app is built from the ground up with AI as a core architectural requirement, meaning the data pipelines, model integration points, and backend services are all designed to support intelligent behavior from day one. An app with AI features, by contrast, has AI layered onto an existing foundation. The practical difference shows up in performance, latency, personalization quality, and the team’s ability to iterate on those features without major refactoring work.

Why do AI-added apps underperform compared to AI-native ones?

When AI is bolted onto an existing architecture, engineers are forced to work around a structure that was never designed for real-time inference. This creates latency problems, fragile state management, and personalization logic that cannot access the data it needs. The result is AI features that feel slow or shallow, which drives churn rather than engagement.

What technologies underpin a well-built AI-native mobile app?

The core stack typically includes on-device frameworks like TensorFlow Lite or Core ML for low-latency inference, cloud-based model APIs for heavier tasks, modular service architecture for independent model updates, and MLOps tooling for continuous monitoring and versioning. Cross-platform frameworks like React Native and Flutter both offer strong support for AI library integration across iOS and Android.

How much does it cost to build an AI-native mobile app in 2025?

Costs vary significantly based on complexity. MVP-level AI-native apps typically range from $30,000 to $150,000, while enterprise-grade builds with custom generative AI components can exceed $500,000. Key cost drivers include model complexity, data infrastructure, regulatory compliance requirements, and the ongoing cost of cloud compute and model maintenance after launch.

Which industries are seeing the most impact from AI-native mobile development?

Productivity, healthcare, finance, and education are seeing the deepest integration of AI-native design patterns. Fintech companies are using AI for real-time risk assessment and fraud detection. Healthcare platforms are deploying it for adaptive clinical workflows. Consumer apps in entertainment and lifestyle are using it to drive personalization at scale, which is directly correlated with retention and subscription revenue.
